Dimension CC combines V-Ray rendering with machine learning so anyone can create realistic 3D imagery. Adobe's Zorana Gee and Ross McKegney explain how they launched their new app faster using V-Ray App SDK.

Earlier this year, Adobe product manager Zorana Gee and engineering director Ross McKegney joined Chaos Group’s Chris Nichols and Lon Grohs for the CG Garage Podcast. They talked about how Dimension CC works, bringing it to market with V-Ray App SDK, its smart machine learning, and how it seamlessly fits into the bigger picture of Adobe software.

We've rounded up some highlights of the podcast, and you can listen to the original recording at the bottom of this page.

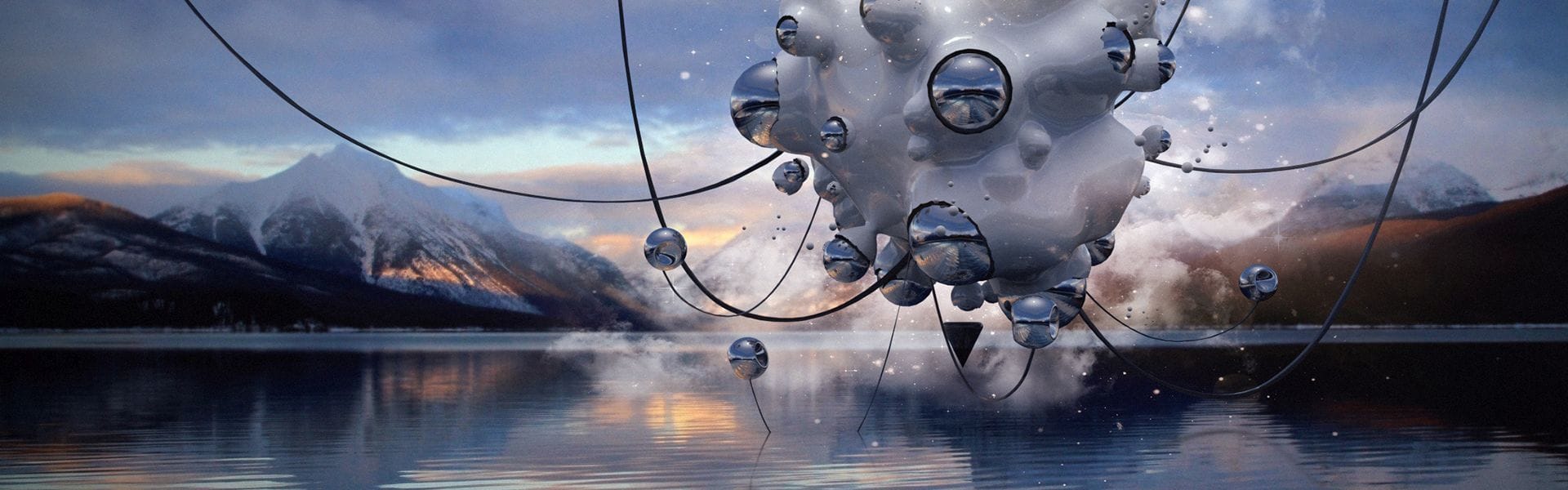

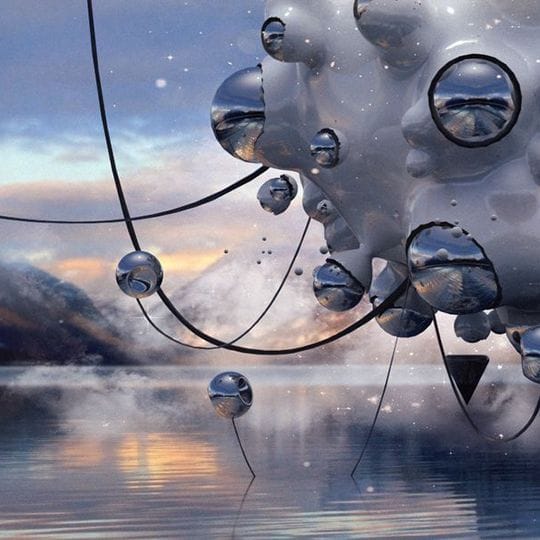

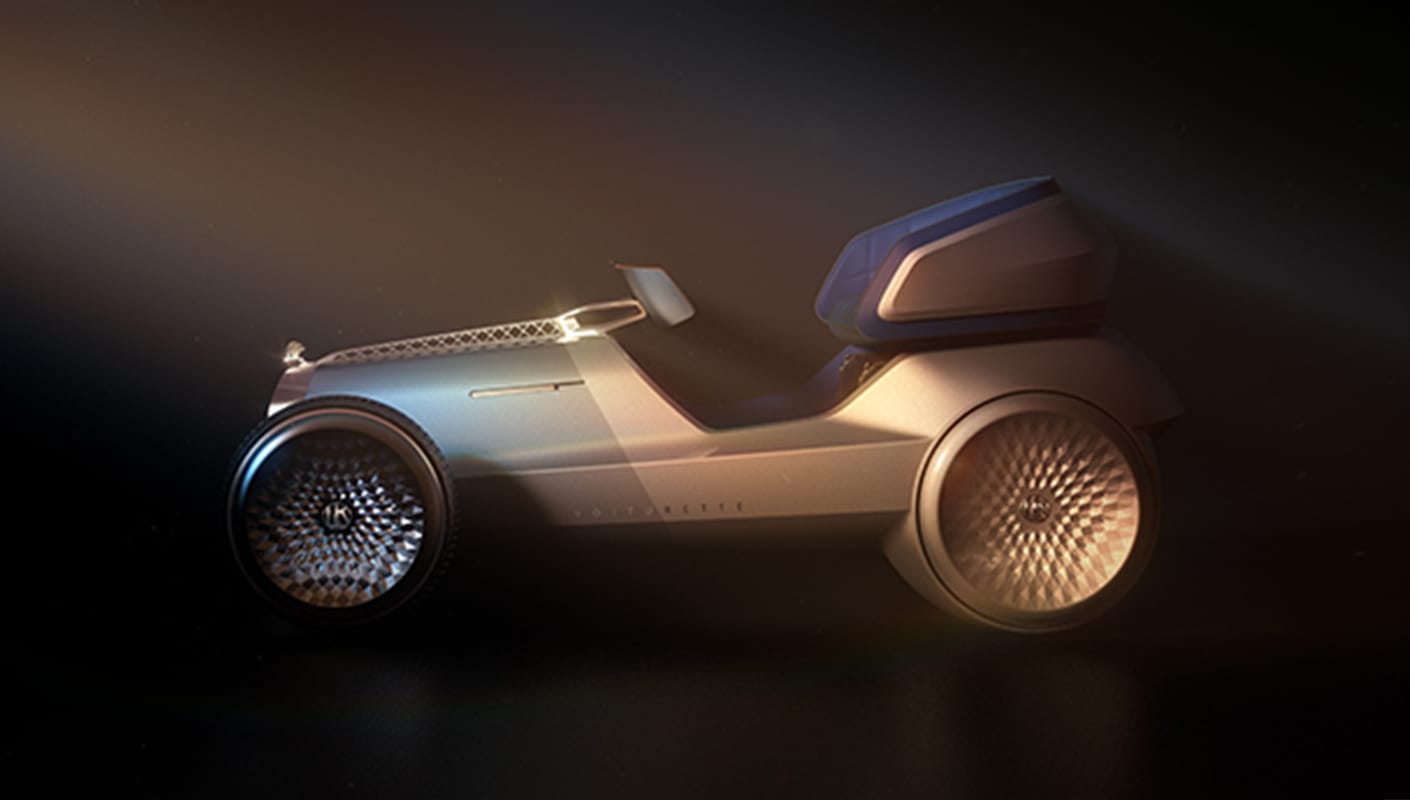

All images courtesy of Cinniature.

Explain a workflow that you would imagine some user might go through.

Zorana: It's really important for a designer to be able to communicate how real a material looks, such as plastic, metal, aluminum. But they also need realism in terms of the context of how a product is going to be sold or used, or how they want to pitch it to a client.

Dimension CC can analyze an image and detect the flat surfaces. How does this work?

Ross: Fundamentally, what it's doing is not algorithm-based, it's machine learning-based. There's no algorithm detecting floors or walls or surfaces, but over time the system learns where those surfaces are. We have a massive number of images that we use to teach an algorithm what a floor looks like. When you have an image that has a grassy field, or a tabletop, it can detect the dominant surface in that image because it's been trained against a large data set. Once it's trained, it knows where the surface is. Then it can place the ground plane to match that surface and place the 3D object on top of it.

For us, it's the only way to make 3D accessible to our user base. We're going after Photoshop, Illustrator, and After Effects users, the entire Creative Cloud community. You want a tool that any of them can use and feel comfortable with, one that looks and feels like the tools they are familiar with. It doesn't impose the new complexities of 3D on them, and that's the opportunity we see in opening this platform, and 3D, to the masses.

You guys seem to have taken a philosophy of saying: We're going to make things as simple as possible, but use the full power of V-Ray at the same time, right?

Zorana: It's a professional tool. The power and the quality of what you get from Dimension is definitely for professionals, but the automation or the simplicity aspect is what you see first. And you can always drill down to get into more advanced properties.

Ross: What you just described reminded me of a quote I heard recently: the definition of product management is to lose the fight against complexity as slowly as possible. That's a critical thing. It's got to be easy to use and the result has to look professional. It can't look like it was easy, it has to look really, really good. But that's so hard. That's why we've invested in AI and research. I keep a relentless focus within the product team on making sure we're not overcomplicating the interface, and it's a battle we're continuing to wage.

Where do you see this going from here?

Ross: Interoperability is a big topic for us, and there's a lot of work we're doing to work with Photoshop, Illustrator, After Effects. You'll see more and more features roll out in those areas just to streamline the workflows between those tools. An ideal scenario would be if I can live link my Illustrator and Dimension. Enabling those types of workflows, where it just gets easier and easier to work with the products together, is probably the biggest opportunity for us to grow.

Zorana: The machine learning angle is definitely a critical aspect to our growth as we move forward. We call it Adobe Sensei — one data set to rule them all.

What was it like to work with the Chaos Group guys to get V-Ray App SDK integrated?

Ross: With the App SDK we were able to just plug it in. We had some back and forth around our configuration and working through some issues, but everything was smooth. We're very happy working with Chaos Group.

Zorana: No way we could have gone to market as fast as we did, within a year, without working with Chaos Group.

You're looking for ideas from the users. What are some of the things you're interested in learning about?

Ross: We’re thinking through the workflows of the people you interact with. Are there cases where it will be easier for you to set up a scene and hand off a scene that can be rendered, rather than doing the rendering yourself?

As we're doing that, we're always keen to hear about features or opportunities for us to make the product better. One of the things we're exploring is a cloud rendering solution as a native part of the experience. If anyone would like to try that, it's something that we'll be kicking the tires on soon.