Arch viz masters, app developers, VR pioneers… Is there anything Brick Visual can’t do?

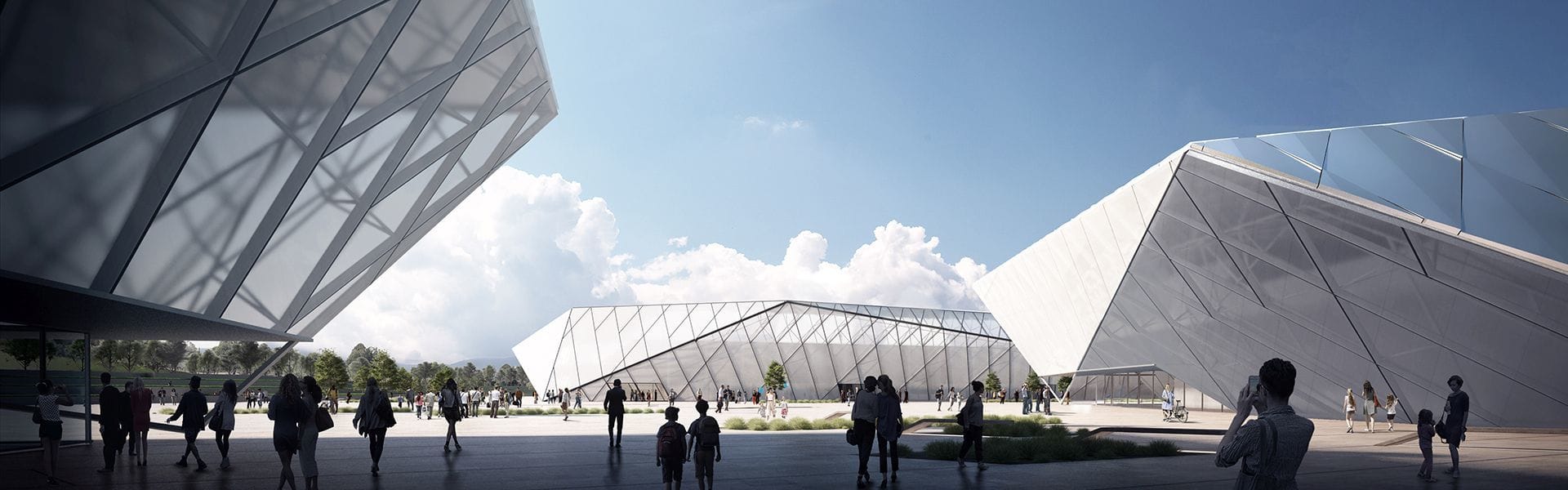

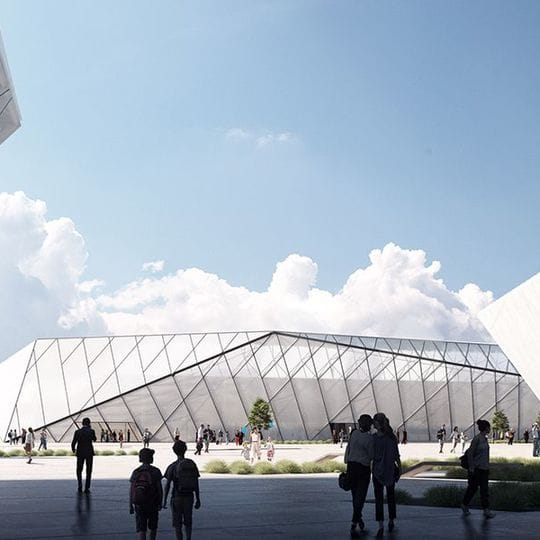

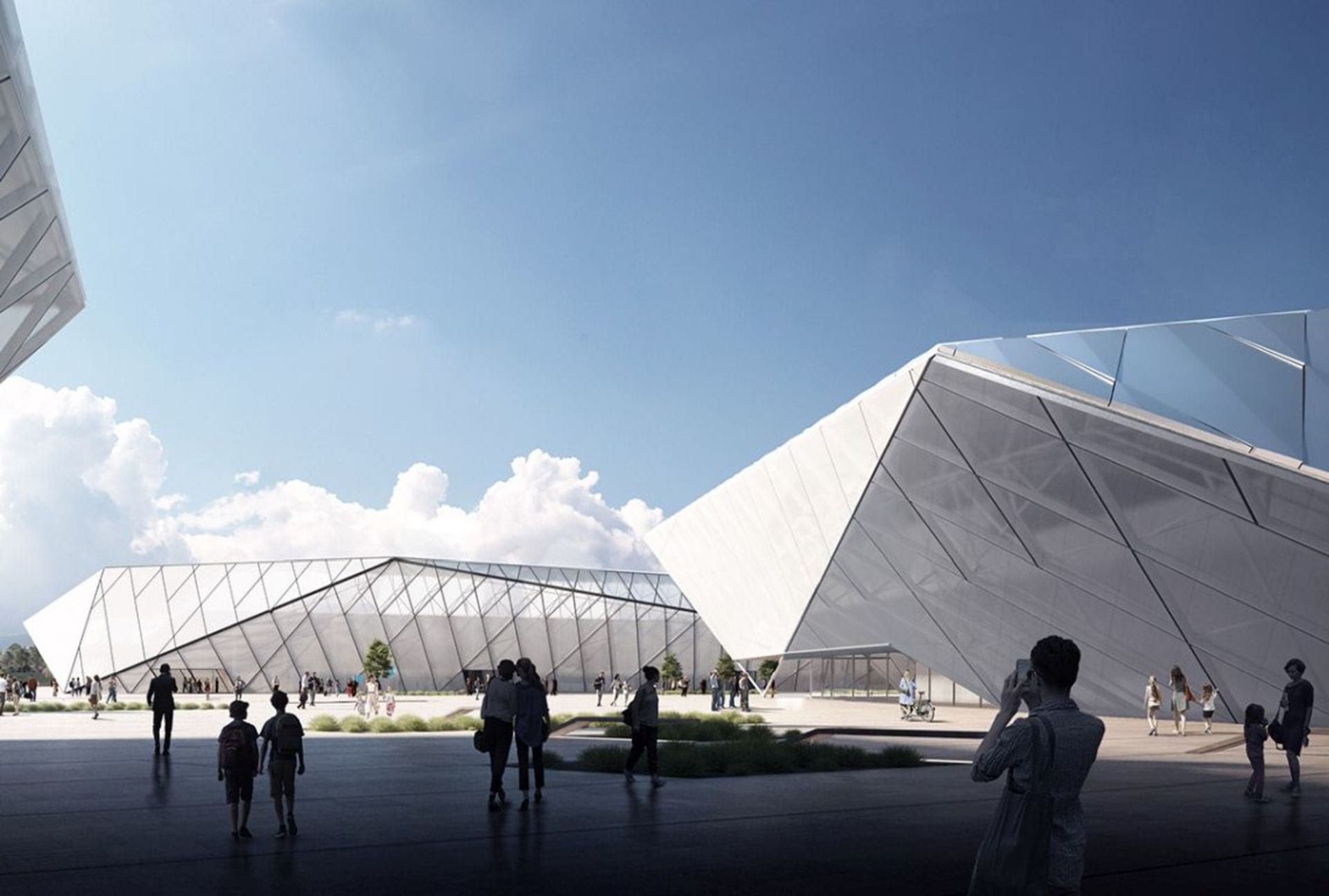

Brick Visual’s co-founders András Káldos (CEO) and Attila Cselovszki (CTO) have applied a Silicon Valley startup mentality to the architectural visualization industry. Founded in Budapest, Hungary, in 2012, the company produces eye-catching imagery, cinematic animation and innovative motion stills for big names such as Gensler, Snøhetta, and BIG.

Brick Visual also hosts a research and development department which creates internal tools, streamlines its software pipelines, and even builds public-facing mobile apps, which is rare for an arch viz company.

At a time when new technology is dramatically shifting the way arch viz studios operate, it’s more important than ever that the company sits on the cutting edge of innovation. We spoke to András and Attila about how the company is set up, how they work with VR, and the future of the industry.

What makes Brick different to other arch viz companies?

András

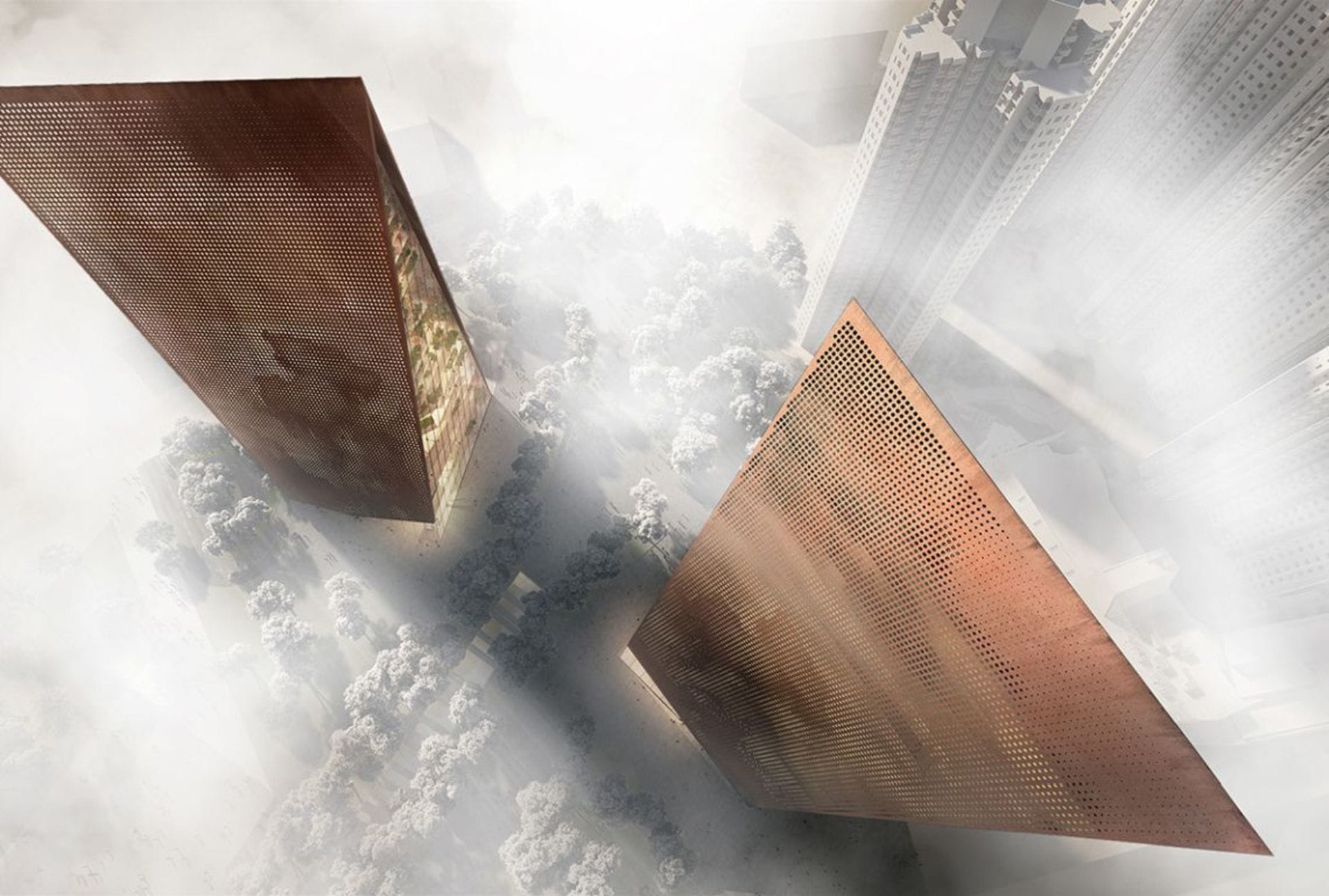

Since we started, we have established a distinctive style inspired by classical paintings and top-notch cinematography. This "Brick-like" visual language reaches beyond aesthetics. It's about communicating design and architecture in the most captivating and honest way.

Brick is an extraordinarily large company for the arch viz field. This size is challenging to manage, but it has many advantages. We can take on many projects, we are technologically well-prepared, and there is a very diverse range of know-how among our staff, which we make use of every single day.

Recently, we launched a separate research and development team to make sure Brick is a technological flagship of the arch viz industry. Three people constantly work on cutting-edge solutions, such as VR development or arch viz-related programming. Just to give you an idea: this year 10% of our budget goes to this team. That’s a lot.

Could you tell me more about what these developers do?

Attila

Our work can be divided into three main areas:

Every project starts out as Service Development. This is primarily the development of the production pipeline. Our goal is to make life easier for our artists, and to automate repetitive tasks so there is more space and time for creative work. In addition to this, we support the back office. From helping IT and finance to producing the commercial department’s sales materials, our activity is on a company-wide scale.

Certain projects expand into products, via In-house Product Development. This standalone software is perfected within Brick with the help of over 35 artists.

Some software is released outside of Brick. In this case, we are talking about External Product Development. We are in touch with renowned arch viz companies and world-famous architect offices, who are now integrating our solutions in their pipelines. Our research and development team also handles their support.

Who are your clients, and how do you attract them?

András

Our clients are top architecture studios, who choose us because of our narrative, high-quality visuals and our well-organized, businesslike attitude. It’s important to highlight that we are not just vendors but proactive collaborators. As our team consists of architects, we understand our clients and think like architects.

As a company from the Central and Eastern European region, we knew from the beginning that it would be a challenge to make a name for ourselves. We have concentrated on personal communication and problem solving to build close relationships with our clients, and their feedback has given us a major advantage.

How have you and your clients found the move into VR?

András

There is a massive amount of hype around VR — but when it comes to specific technological solutions, clients have little experience. Thanks to our solid pipeline and previous experience, we can create satisfying solutions to vague ideas. We start a dialogue with our clients so they understand the basics, such as the difference between static and real-time, and we find out their needs and priorities.

We quickly realized that VR is a service rather than a product, because beyond production you have to take time on client education and making the content user-friendly. The move from our side was a reaction to client demand. We dove in at the deep end of VR — but we always feel comfortable with challenging situations.

What are the advantages of VR for arch viz?

Attila

Architecture is fundamentally spatial, and all its visualizations, from facade plans to animations, have been two-dimensional so far. VR is the first medium that can transmit the spatial qualities of a building.

Now the industry must figure out how to communicate in a new medium that has one more dimension than the familiar platforms and products.

We also focus on the business perspective of VR, and collaborate with clients to figure out how they can effectively utilize this new technology. We are also working on an application that focuses on the needs of the day-to-day user. More details will be revealed soon.

What do you have to do differently to create a VR experience?

Attila

In real-time VR the answer, in short, is: everything! The traditional arch viz pipeline and the game-developer pipeline necessary for real-time VR are two fundamentally different processes. Part of the industry is currently working on minimizing or eliminating the distance between the two. We are working on the same issue on an experimental level, but we are far from selling a large volume of real-time products. Fortunately, market demand is still pretty small — but this will change in the near future.

With panoramic 360, we can implement a traditional arch viz approach in VR. With time and software development, real-time content creation will simplify and become similar to a normal arch viz pipeline. On the other hand, static VR will accelerate to the point of becoming interactive.

VR is transforming into a service in which the client wants to play an interactive role. This is another big challenge: What is the client going to do with your VR content? How do you deliver it, how do they install it, how do you manage technical support?

How does V-Ray help you create VR projects?

Attila

At Brick we have been using V-Ray for 3ds Max from day one, and we always try to update to the newest version as soon as possible. The new features and improvements help us render faster and efficiently handle complex scenes, which is critical in large VR projects. In the early phase of a project, GPU rendering is used for previews, or at client meetings, where we can change the angle and the lighting in no time.

It's exciting to see V-Ray integrated into major architecture software and game engines. That is a game changer for the arch viz industry, and it will bring faster and easier workflows among these applications.

Could you talk me through a recent VR project?

András

We recently had a big VR project, an office building in Silicon Valley, from Gensler. In the first phase of the project, we had to deliver a series of still images with our traditional workflow. We modelled the necessary parts for the images, rendered them, and did the post-production in Photoshop as usual.

But for the second part of the project, we had to create six VR images from interior and exterior viewpoints. The first challenge here was that many of the angles were from higher levels, or on the rooftop. Because there was no way to get stereo panoramic photos, we had to go back and model the entire view, which was about 30 km in the end. We divided this environment into three parts.

For the really distant areas, we used CAD data for the buildings, roads and vegetation, and Google Earth for the ground and the mountains. We used Google as a reference for closer elements and neighborhood buildings, and we modelled and textured everything in as much detail as needed.

What were the biggest challenges, and how did you overcome them?

Attila

We kept everything in separate files, so for a given VR scene we only loaded the parts of the building and the interiors that we needed. Even with this optimized workflow and very powerful workstations, the file loading and saving times were enormous, so after a while just loading a scene and starting to render an image was a challenge in itself.

The other difficult task was that our client wanted the same quality as our still images, but in stereo VR images. There are no off-the-shelf solutions for stereo post-production, so we developed an in-house solution. We created a script for After Effects that let us do our post work normally (with masks, adjustments, etc.) for one eye, and it would also sync these adjustments to the other eye. The final output goes straight to our VR application, or any kind of VR device.

Our 3D pipeline helped us manage these huge files. And Tesseract, our custom in-house VR post-production script, was used in heavy production for the first time.
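
To make the "adjust one eye, sync to the other" idea concrete, here is a minimal Python sketch. It is not Brick's script and does not use the After Effects scripting API. The `MaskedGain` adjustment type, the function names, and the single constant `disparity_px` shift are all assumptions made for illustration. In a real stereo panorama the offset between the two eyes varies with depth, so a production tool would need something more sophisticated than a fixed shift.

```python
from dataclasses import dataclass, replace
from typing import List, Sequence, Tuple

Image = List[List[float]]  # grayscale pixels in [0, 1], stored row by row


@dataclass(frozen=True)
class MaskedGain:
    """A brightness gain limited to a rectangular mask (hypothetical adjustment type)."""
    x0: int
    y0: int
    x1: int  # exclusive
    y1: int  # exclusive
    gain: float


def apply(image: Image, adj: MaskedGain) -> Image:
    """Return a copy of the image with the gain applied inside the mask, clamped to 1.0."""
    out = [row[:] for row in image]
    height = len(out)
    width = len(out[0]) if out else 0
    for y in range(max(0, adj.y0), min(adj.y1, height)):
        for x in range(max(0, adj.x0), min(adj.x1, width)):
            out[y][x] = min(1.0, out[y][x] * adj.gain)
    return out


def sync_to_other_eye(adj: MaskedGain, disparity_px: int) -> MaskedGain:
    """Copy a left-eye adjustment to the right eye by shifting its mask horizontally.

    Assumption: one constant pixel shift stands in for the true, depth-dependent disparity.
    """
    return replace(adj, x0=adj.x0 - disparity_px, x1=adj.x1 - disparity_px)


def grade_stereo_pair(
    left: Image,
    right: Image,
    adjustments: Sequence[MaskedGain],
    disparity_px: int,
) -> Tuple[Image, Image]:
    """Apply each adjustment to the left eye and its synced copy to the right eye."""
    for adj in adjustments:
        left = apply(left, adj)
        right = apply(right, sync_to_other_eye(adj, disparity_px))
    return left, right
```

The artist only ever authors the left-eye list of adjustments; the right-eye versions are derived, so the two images cannot drift apart as the grade is revised.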

What are you working on next?

Attila

We aim to make our VR application publicly available through the Google store. And we’re developing a feature called Agent Mode, in which salespeople can guide the user through the VR space.

András

The R&D Team is working on other very interesting projects right now, such as Tesseract. And we’re constantly reinforcing what Brick Visual has achieved so far, and setting new goals.

Could you tell me about the virtual production workflow you presented at IM-ARCH?

Attila

It was an honor to attend the IM-ARCH conference, and a great opportunity to introduce Brick CamTrack, an in-house experiment that we are proud of. It uses VR tools, but offers a solution for a completely different purpose.

The idea came from an ILM technical sneak peek, and mixed reality tutorials. We wanted to create a solution that would make it easier to take live camera recordings in our studio with the help of Vive tools. The system is based on a Unity application. This broadcasts live Vive Tracker data to 3ds Max, and it handles the signal coming from the actual camera and blends it with the virtual model.

This makes the workflow easier on three levels:

Live keying helps the cameraman and director during recording.

There is no need for post tracking on the footage because the camera movement is captured with the correct accuracy in 3ds Max.

We don’t need to use tracking marks in the green box, which makes keying easier for the post-production.
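
As a rough illustration of the broadcasting step described above, the sketch below streams a tracker pose over UDP and decodes it on the receiving side. It is written in Python for brevity and is not Brick CamTrack itself, which is built on a Unity application talking to 3ds Max. The wire format (seven little-endian floats: position plus rotation quaternion), the `Pose` type, and all function names are assumptions.

```python
import socket
import struct
from typing import NamedTuple, Tuple

# Hypothetical wire format: 3 position floats followed by 4 quaternion floats.
POSE_FORMAT = "<7f"
POSE_SIZE = struct.calcsize(POSE_FORMAT)  # 28 bytes


class Pose(NamedTuple):
    """Tracker position (x, y, z) and orientation quaternion (qx, qy, qz, qw)."""
    x: float
    y: float
    z: float
    qx: float
    qy: float
    qz: float
    qw: float


def encode_pose(pose: Pose) -> bytes:
    """Pack a pose into a fixed-size datagram payload."""
    return struct.pack(POSE_FORMAT, *pose)


def decode_pose(payload: bytes) -> Pose:
    """Unpack a datagram payload back into a pose."""
    return Pose(*struct.unpack(POSE_FORMAT, payload))


def send_pose(sock: socket.socket, address: Tuple[str, int], pose: Pose) -> None:
    """Send one pose sample; UDP keeps latency low at the cost of possible drops."""
    sock.sendto(encode_pose(pose), address)


def receive_pose(sock: socket.socket) -> Pose:
    """Block until one pose sample arrives and decode it."""
    payload, _sender = sock.recvfrom(POSE_SIZE)
    return decode_pose(payload)


def loopback_demo(pose: Pose) -> Pose:
    """Send a pose to a receiver on the local machine and return what arrives."""
    receiver = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
    sender = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
    try:
        receiver.bind(("127.0.0.1", 0))  # let the OS pick a free port
        receiver.settimeout(1.0)
        send_pose(sender, receiver.getsockname(), pose)
        return receive_pose(receiver)
    finally:
        sender.close()
        receiver.close()
```

In a setup like the one described, the sending side would run once per tracking frame and the receiving side would apply each decoded pose to the virtual camera, which is why no tracking pass is needed on the footage afterwards.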

For a more detailed making of, and a test movie, take a look at this article.